On 09, Sep 2016 | No Comments | In musings, reflections from the field | By Sara Vogel, PhD.

Leigh Ann DeLyser from CSNYC brought this awesome blog post by Mark Guzdial to my attention today. It summarizes Yogendra Pal's thesis research, “A Framework for Scaffolding to Teach Vernacular Medium Learners.” It was exciting to see language being considered in conversations about CS education, so I wrote a comment in reply (probably way too long, but I got excited!). It’s excerpted below…

Now that CS is going universal at the K-12 level all around the country (including in my home city, NYC), we’re going to have to think about the ways that diverse learners, among them emergent bilinguals, encounter CS. That means fields like bilingual ed and CS ed (more or less siloed until now) are going to start talking to each other. Which is exciting!

I think about touchpoints between Yogendra’s practice as you describe it and what we in bilingual ed would call translanguaging pedagogy: the idea that learners should use their full linguistic repertoires fluidly and flexibly to make meaning in multilingual classrooms, and that teachers play a role in facilitating that. The goal was deep CS learning, so videos and class materials in the various languages were, from what I can tell from your summary, helpful for different people in different ways. If the word for a certain technical term doesn’t really exist in Hindi, why bother translating it into an overcomplicated word that students won’t use, when the English one works fine and will be new for everyone? I bet that walking into that classroom, you’d hear a range of diverse linguistic practices, with students drawing on English, Hindi, maybe even other languages, and programming languages as they negotiated content with each other and the professor. At least I know that’s what happened in my multilingual Scratch after-school program in the South Bronx! All bilinguals are different, exhibiting a range of competencies. Those competencies have to be incorporated so that students’ language practices can become tools for meaning-making.

In my mind, good practice with emergent bilinguals is not just about providing opportunities for students to use their home language to scaffold CS tasks, but about really engaging with the strengths of these students and what they bring.

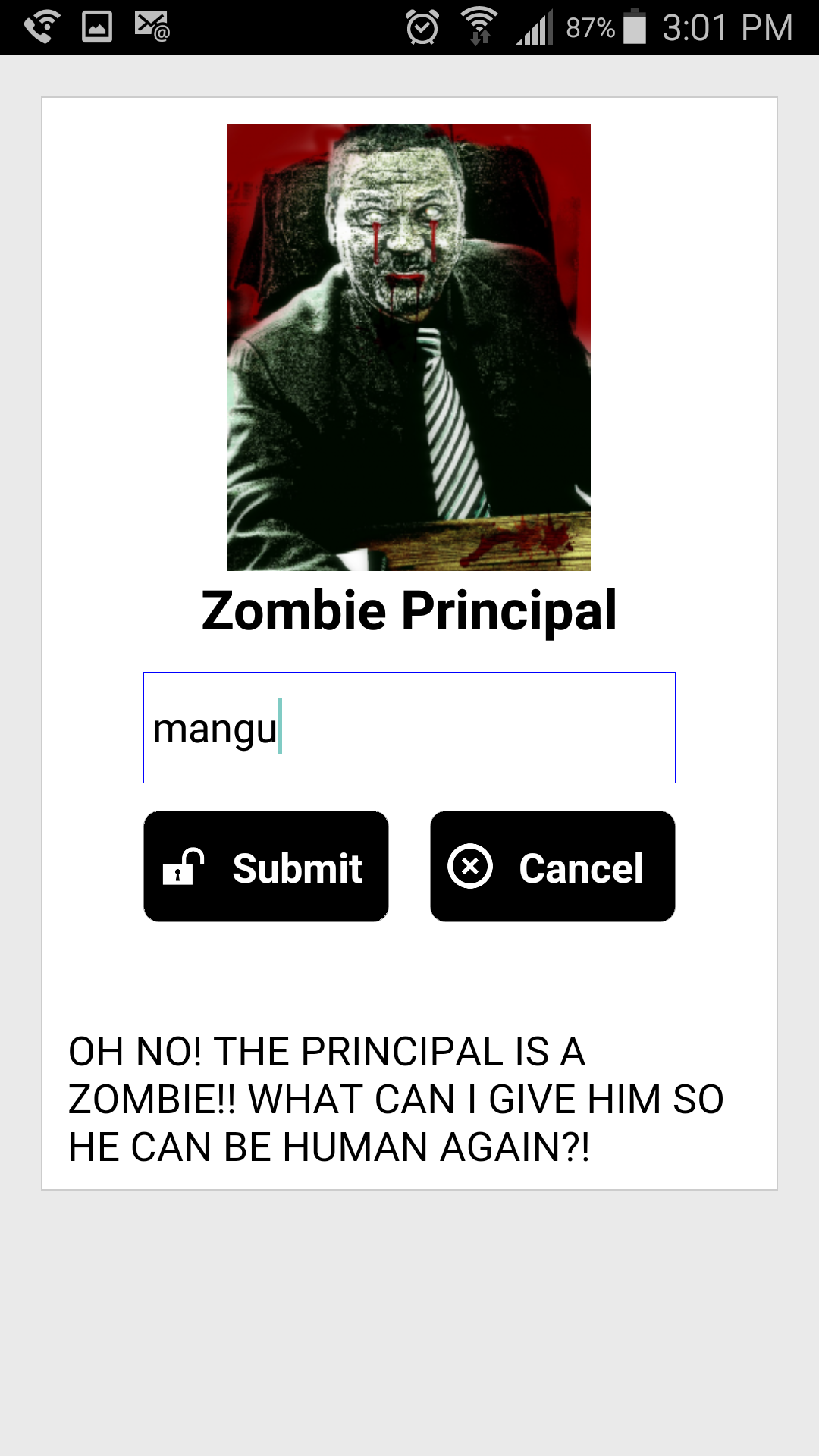

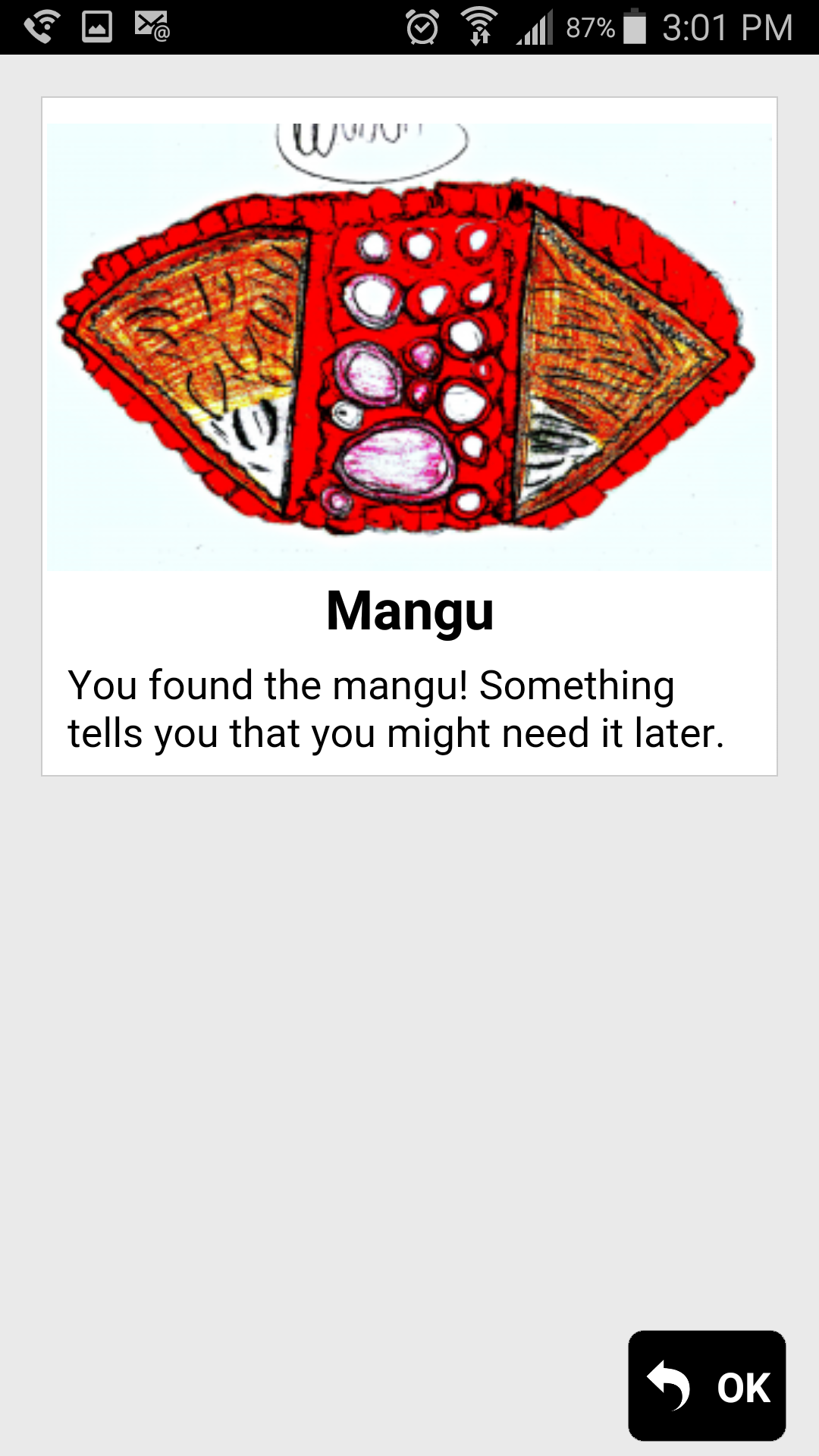

Students’ linguistic backgrounds, for instance, inform them as they imagine truly original coding projects. My students in East Harlem a couple of years back designed and coded a video game with TaleBlazer, the MIT-created blocks-based software, in which you had to save the principal from turning into a zombie by giving him mangú, the traditional Dominican dish.

If CS programs are going to have the most impact, we have to welcome every opportunity for kids to draw on their linguistic and cultural backgrounds in and through CS.

My new video series, “Teaching Bilinguals (Even if You’re Not One)”

On 02, Sep 2016 | 2 Comments | In videos | By Sara Vogel, PhD.

I’m putting together a video series about translanguaging (mostly geared towards monolingual educators) to fulfill my independent study in the Interactive Technology and Pedagogy program at the GC and as part of my work as a member of the CUNY-NYSIEB Team.

Episode 1 is below!

Watch the whole series here: https://www.cuny-nysieb.org/teaching-bilinguals-webseries/

What Facebook Knows About Me

On 26, Aug 2016 | No Comments | In musings | By Sara Vogel, PhD.

Maybe I’m late to the party on this one. But still! My dear friend Devon recently shared this fascinating (terrifying?) article about how non-journalistic content providers are cornering valuable real estate on people’s Facebook newsfeeds with biased snippets about the election. He also included a link to a page on Facebook’s website (which the New York Times had written a short feature about) where you can take a peek at the categories Facebook uses to determine which ads you see when you idly scroll. I already knew Facebook was collecting tons of data about me in order to determine, somehow, the extent of the echo chamber that is my news feed. But there is something delightfully and tantalizingly navel gaze-y about ogling this page anyway. What do they know about me? Are they right about me? What in the world could they mean by “pessimism”? Is that my outlook? The questions start verging on the existential: Am I somehow this random collection of categories? The algorithm might know more about me than some casual acquaintances do! Though it also has some pretty important misconceptions and major oversights. Automobiles? Really?

After a few minutes of this, I wake myself up from marveling at and fetishizing this technology. How important is it to me that these imperfect details are out there in the world, forming my digital profile and persona? Is this all they have? Where will this data go? How is it being used?

These are all questions I ponder, as I respond to Devon, and then continue idly scrolling away.

Google Translate bot, what are your politics?

On 21, Aug 2016 | No Comments | In reflections from the field | By Sara Vogel, PhD.

Last school year, my incredible colleague at Brooklyn College, Laura Ascenzi-Moreno, and I followed a 6th grade newcomer student from China and his teachers, paying attention to how they integrated Google Translate (as a translanguaging practice) into their practices to communicate with each other and learn. In multilingual classrooms, Google Translate has become a ubiquitous tool. It is used not just to make sure parents can read homework assignments and notes but, as we found, in ways that actually contribute to the relationships between teachers, students, and knowledge.

I don’t want to fetishize the role of this software. Of course, dynamics at schools are influenced by a myriad of interacting factors, and there were many ways that communication and expression were negotiated in this classroom. But the student’s participation as a community member, his teachers’ access to his thinking, and their conception of the student’s voice as a writer: this software was playing a role in shaping all of that. Our chapter on that stuff will come out eventually, and you’ll be able to read what we say.

But I’ve been thinking a lot about my dear friend Rafi Santo’s work on Hacker Literacies: the idea that ideologies are not just behind the media messages we consume but are actually baked into technologies and “sociotechnical spaces” (my favorite Rafi phrase). The code and algorithms of technologies, software, and tools, no matter how neutral they may appear, are fair game for critique. Someone made them with an intention, so they are discourse (right? I’ll be taking a class on power and knowledge this semester, so I’ll get back to you on that…)

I believe in the power of educators as activists. To act on that power, I think teachers have to be empowered in relation to the medium AND the message.

With that in mind, I offer some approaches we bilingual educators might take:

- Sort out our feelings about machine translation. There’s not a lot of research out there on machine translation as it’s used in K-12 education. But from what I’ve read about its applications in higher ed foreign language departments and in the field of translation, many people are anxious about plagiarism and copying, jobs becoming obsolete, students relying too much on computers and less on their brains, and poor, garbled, or artless translation. It’s worth figuring out which of these concerns are founded and which we care about.

- Know how it works. It’s complex stuff, but just knowing the basics can help us make our claims. This piece on Medium breaks down the inner workings of machine translation software, and there’s also this video from Google itself.

- Consider machine translation in relation to our politics and theories in bilingual education. To what extent are machine translation algorithms built with the dynamic ways people use language and translanguage in mind? Who designs the algorithms and selects the data corpus to “feed” the machines? What human-translated texts are being “fed” into the machine? To what extent might those texts reinforce static notions of language? To what extent do they reflect colonial relationships and raciolinguistic ideologies? How might machine translation promote translanguaging and dynamic language use in our classrooms?

- Bring critical and technical conversations about machine learning together. My classmate Achim Koh from the GC is working on a project to help academics from a variety of fields understand how machine learning is shaping their disciplines. It’s worth bringing people from bilingual education and linguistics into that dialogue.

The politics of some machine learning systems (I’m thinking about you, racist Microsoft bot) have been damaging, reflecting historical systems of oppression and domination. There’s a lot at stake here. Machine translation has proven itself indispensable to multilingual classrooms in the 21st century. If we and our students are using these tools, we need to think critically about them. They might become instruments of a more linguistically fluid world, or they might reinforce linguistic hierarchies; more likely, they have some complex role to play in between. Already, my adviser’s favorite metaphor for language separation (the language button on the iPhone) is breaking down with this announcement about Siri’s new translanguaging capabilities.

The machines learn from humans — humans train them. Which means our ideologies are key.

I started a blog!

On 20, Aug 2016 | No Comments | In meta | By Sara Vogel, PhD.

Tonight, my worlds collided in the form of a helpful Medium post about machine translation: tech + bilingualism. So much to say… but where to say it? Too esoteric for Facebook. Too long and involved for Twitter.

In the last few months, I’ve taken to following “The Educational Linguist,” a wonderful, thought-provoking blog by Nelson Flores, a UPenn professor and GC Urban Ed alum. His work reminded me of my old friend, the blog!

I imagine using this space to mull over those questions that come up while I do my readings (or procrastinate doing them). To explore those juicy tidbits that surface over lunch with my wonderful classmates and colleagues at the GC. To raise the profile of those ideas and questions scrawled digitally in the comment boxes of my Google Docs.

I’ll probably talk a lot about the areas that interest me as a bilingual education techie — translanguaging, computer programming, pedagogy, social justice…

I hope this is enjoyable for any dear readers who come across it!